Jump to:

- Part 1 – Introduction to hot-warm-cold-frozen architecture

- Part 2 – Building an experimental multi-tier architecture with docker-compose

- Part 3 – Demonstrating how a multi-tier architecture works (manually migrating data)

- Part 4 – Migrating data through tiers automatically

- Part 5 – How to optimize your cluster architecture to reduce Elasticsearch costs

What this guide will cover

In this guide we’ll introduce the concept of a multi-tier Elasticsearch cluster architecture and explain how to implement one. We’ll first talk about what a multi-tier architecture is, its advantages and use cases, and how Elasticsearch 7.10+ implements it. We’ll then take a look at data tiers, setting up the cluster nodes and index allocation.

In the second part of this guide we’ll present an experimental stack to easily demonstrate some concepts, which can also be used for further experimentation. We’ll be configuring a couple of nodes as explained in the first part.

In the third part we’ll experiment with our stack. We’ll start by taking a look at how Elasticsearch actually implements multi-tier architecture. You’ll see that once you have your nodes set up it is only a matter of managing the lifecycle of your data throughout the tiers of your architecture. We’ll first do so manually, step by step.

In the fourth part of this series we’ll check out how to have our data migrated automatically, given some trigger conditions.

The practical part of this series will be carried out with the help of a docker-compose stack, so make sure you have that installed on your system if you want to follow along.

Part 1 – Introduction to hot-warm-cold-frozen architecture

A multi-tier architecture allows for better organization of resources to fit various search use cases. Cluster nodes can be organized into pools: one with fast hardware profiles to take care of the most frequently searched data, and others with progressively less powerful hardware profiles to serve requests for data that is searched less often. The number of nodes in each tier can also differ, meaning your most frequently queried data could be stored in the tier that has the largest number of nodes, each provisioned with the fastest profile.

It’s not required to have a different number of nodes or different hardware profile for each tier, but this is something you’ll typically want to do when implementing this type of architecture.

In Elasticsearch’s multi-tier implementation, the tiers are named hot, warm, cold and frozen, and data traverses them in that order throughout its lifecycle. It’s not required to implement all of them; only the hot tier is present in most use cases, since it serves as the entry point. The diagram below shows an example of multi-tier architecture.

Motivation and use cases

There are lots of use cases where data gets searched even less often as time goes by. Time series data such as logs, metrics and transactions in general are a good example of data that is of less interest to users as the days/weeks/years go by.

Any time series use case is a serious candidate for and would probably benefit from a hot/warm/cold/frozen architecture, but here are some examples:

- Financial transactions (balance, credit card, stock exchange, …)

- Observability (metrics, logs, traces, …)

- E-commerce user history (orders, website telemetry, support tickets, …)

Implementing a multi-tier architecture in Elasticsearch 7.10+

Data tiers

Elasticsearch 7.10 introduced the concept of data tiers implemented as node roles. Since then, this has been the proper and recommended way of implementing a hot/warm/cold/frozen architecture.

A data tier is a collection of nodes that share some common characteristics, mainly related to hardware profile and resource availability. There are currently five node roles representing different data tiers:

- Content tier: generally holds data for which the content, unlike time series data, will be kept relatively constant over time, with no need to migrate documents over to other tiers. Usually optimized for query performance and in most cases not as indexing-intensive as time series data like logs and metrics would be (though this is definitely a tier where documents will be indexed, just not at the same rate as in a high-frequency time series index). It’s a required tier, since system indices and any other indices not part of a data stream will be indexed through it. If you consider an e-commerce use case the list of products could perhaps be indexed in an index kept in this tier.

- Hot tier: considered the entry point for time series data. Will hold the most recent indexed and most frequently searched data. Nodes will typically be configured with fast hardware profiles, especially when it comes to IO. Also required, since data streams will index new data in this tier. When users of an e-commerce website consult their order history they are probably more interested in orders from the last month or so than in orders from last year, for example.

- Warm tier: once data in the hot tier gets searched less frequently, it can be moved to a less expensive hardware profile. This will depend on the use case, but once you have identified the point in the data lifecycle at which data gets searched less frequently, you can configure a policy that will migrate it to the warm tier. Data in this tier can still be updated, but less frequently than in the hot tier. Considering the e-commerce example, the user might not be very interested anymore in purchases that happened, say, more than three months ago.

- Cold tier: documents that will likely not be updated anymore are ready to be moved to the cold tier. Here data can still be searched, but it happens even less frequently than in the previous tier. Migrating data to this tier is an opportunity to shrink and compress it in order to save disk space. Also, instead of having replicas, data in this tier could rely on searchable snapshots to ensure its resiliency. A user’s order history from last year and before could be a candidate to reside in this tier, if we consider the e-commerce example.

- Frozen tier: finally, the frozen tier is where data will likely rest for the rest of its days; it’s where the lifecycle comes to an end. In this case the documents are not being queried anymore (or it happens rarely). This tier is implemented with partially mounted indices loading data from snapshots, which reduces storage needs and overall processing costs. It’s recommended to use dedicated nodes for this tier.

Setting up the nodes

In order to implement a multi-tier architecture, the first step is to configure the node roles of each node in your cluster. You do that by setting the node.roles attribute in the elasticsearch.yml configuration file. Here are the possible values when it comes to data node roles: data (this is the generic dedicated data node, with no tier implementation), data_content, data_hot, data_warm, data_cold and data_frozen.

Some things to keep in mind here:

- In a multi-tier implementation, a node can belong to multiple tiers.

- A node that has one of the specialized data roles cannot have the generic data role.

- It’s required to have at least one node configured for the content and hot roles.

Here’s an example on how you could configure a multi-tier data architecture by configuring the node.roles attribute in the elasticsearch.yml of a group of only three nodes:

* Other required configuration attributes are suppressed. Also, the focus here is the data layer of the cluster, so nodes with other roles, such as master, were also suppressed.

data_node_01:

node.roles: [ data_content, data_hot ]

data_node_02:

node.roles: [ data_warm, data_cold ]

data_node_03:

node.roles: [ data_frozen ]
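The rules listed above can be checked with a few lines of code before a cluster is started. The following is a minimal Python sketch, not something Elasticsearch ships: the function name validate_data_roles and the dictionary layout are assumptions made purely for illustration, and it only encodes the constraints stated in this guide.

```python
TIER_ROLES = {"data_content", "data_hot", "data_warm", "data_cold", "data_frozen"}


def validate_data_roles(nodes):
    """Return a list of problems found in a {node_name: [roles]} mapping.

    Hypothetical helper: it only encodes the rules listed in this guide.
    """
    problems = []
    for name, roles in nodes.items():
        # The generic data role cannot be combined with a tier-specific role.
        if "data" in roles and TIER_ROLES & set(roles):
            problems.append(f"{name}: generic 'data' role mixed with tier roles")
    # At least one node must hold the content role and one the hot role.
    for required in ("data_content", "data_hot"):
        if not any(required in roles for roles in nodes.values()):
            problems.append(f"no node holds the {required} role")
    return problems


# The three-node example from this section.
cluster = {
    "data_node_01": ["data_content", "data_hot"],
    "data_node_02": ["data_warm", "data_cold"],
    "data_node_03": ["data_frozen"],
}
print(validate_data_roles(cluster))  # prints []
```

An empty list means the layout respects the rules; each string in a non-empty list names one violated rule.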

Index allocation

When you create an index, Elasticsearch will give preference to allocate it in the content tier. It does this by looking at the index.routing.allocation.include._tier_preference index setting, which has data_content as its default value. On the other hand, when Elasticsearch creates an index as part of the data stream abstraction, this index will be allocated in the hot tier.

So, this is the first way you can influence where your index gets allocated. You can change the default behavior by either:

- Specifying shard allocation filtering settings in your index or in the respective index template, which overrides the automatic tier-based allocation.

- Setting index.routing.allocation.include._tier_preference to null, which opts out of the default tier-based allocation (data tier roles will be ignored during allocation).

Most would prefer to have something in place that will assure that data will migrate on its own from tier to tier as it traverses its lifecycle.

Here’s where Index Lifecycle Management (ILM) comes in handy. ILM helps you manage the lifecycle of your indices by defining policies that will apply actions whenever a condition that triggers a phase transition is reached. The condition can be the index’s age, the number of documents it holds or even its size.

Among many actions that could be configured to execute when the phase transition is triggered, one of interest when it comes to implementing the multi-tier architecture is the Migrate action. This action will change the index.routing.allocation.include._tier_preference index setting with a value that corresponds to the tier that lifecycle phase represents, so the index gets migrated to the desired data tier.

Some important aspects on how this action works are the following:

- This action is automatically injected in the warm and cold phases.

- Naturally it makes no sense to have the Migrate action for the hot phase, since there’s no higher tier than the hot one. Data gets indexed in the hot tier automatically.

- In the warm phase, the Migrate action will set the index’s tier preference setting to both data_warm (preferably) and data_hot (as a fallback). This is necessary because there may be no node configured with the data_warm role, but it is required to have at least one node configured with the data_hot role, so in this case the index will be kept in the hot tier.

- Same case for the cold phase. Since there may be no node configured as either cold or warm, the Migrate action sets the index’s tier preference setting to data_cold (preferably), data_warm (first fallback, if there’s no node with the cold role) and data_hot (second fallback, if there’s no node with either the cold or the warm role).

- Lastly, for the frozen phase the preferred node roles are ordered so as to always fall back to the previous tier (if present), with the hot tier as the last possible fallback. Here is the tier preference order for this phase: data_frozen, data_cold, data_warm and lastly data_hot.
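The fallback order described in the list above can be illustrated with a short Python sketch. This is a simplified model rather than Elasticsearch’s actual allocation code: the names PHASE_TIER_PREFERENCE, tier_preference_setting and resolve_tier are hypothetical, and the resolution step only looks at which tiers have at least one node.

```python
# Tier preference that each phase ends up with, as described in the list above.
PHASE_TIER_PREFERENCE = {
    "warm": ["data_warm", "data_hot"],
    "cold": ["data_cold", "data_warm", "data_hot"],
    "frozen": ["data_frozen", "data_cold", "data_warm", "data_hot"],
}


def tier_preference_setting(phase):
    """Build the comma-separated value of the _tier_preference index setting."""
    return ",".join(PHASE_TIER_PREFERENCE[phase])


def resolve_tier(phase, available_tiers):
    """Pick the first preferred tier that has at least one node (simplified)."""
    for tier in PHASE_TIER_PREFERENCE[phase]:
        if tier in available_tiers:
            return tier
    return None


print(tier_preference_setting("cold"))
# A cluster with no warm or cold nodes keeps a cold-phase index in the hot tier.
print(resolve_tier("cold", {"data_content", "data_hot"}))
```

With only content and hot nodes available, the second call falls through data_cold and data_warm and lands on data_hot, which is the behavior the fallbacks exist to guarantee.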

Part 2 – Building an experimental multi-tier architecture with docker-compose

In order to experiment with a multi-tier architecture, we can bring up a docker-compose stack that will initialize four Elasticsearch containers and one Kibana container. Each Elasticsearch container will be configured with appropriate node roles so we can simulate a multi-tier architecture with nodes playing different roles, as follows:

- es01: master, data_content, data_hot

- es02: master, data_warm

- es03: master, data_cold

- es04: master, data_frozen

*If you don’t have Docker or docker-compose installed check the official documentation on how to get it in order to follow this tutorial. Another option would be to run the services manually, which would still work fine for the purpose of this example. The important thing is to assure your services are set up considering the relevant configuration parameters (node.roles).

Save the following docker-compose specification in a docker-compose.yml file:

version: '2.2'
services:
  es01:
    image: docker.elastic.co/elasticsearch/elasticsearch:7.15.0
    container_name: es01
    environment:
      - node.name=es01
      - cluster.name=es-docker-cluster
      - discovery.seed_hosts=es02,es03,es04
      - cluster.initial_master_nodes=es01,es02,es03,es04
      - node.roles=master,data_content,data_hot
      - path.repo=/usr/share/elasticsearch/snapshots
      #- bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    mem_limit: 1Gb
    volumes:
      - hot_data:/usr/share/elasticsearch/data
      - snapshots:/usr/share/elasticsearch/snapshots
    ports:
      - 9200:9200
    networks:
      - elastic
  es02:
    image: docker.elastic.co/elasticsearch/elasticsearch:7.15.0
    container_name: es02
    environment:
      - node.name=es02
      - cluster.name=es-docker-cluster
      - discovery.seed_hosts=es01,es03,es04
      - cluster.initial_master_nodes=es01,es02,es03,es04
      - node.roles=master,data_warm
      - path.repo=/usr/share/elasticsearch/snapshots
      #- bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    mem_limit: 1Gb
    volumes:
      - warm_data:/usr/share/elasticsearch/data
      - snapshots:/usr/share/elasticsearch/snapshots
    networks:
      - elastic
  es03:
    image: docker.elastic.co/elasticsearch/elasticsearch:7.15.0
    container_name: es03
    environment:
      - node.name=es03
      - cluster.name=es-docker-cluster
      - discovery.seed_hosts=es01,es02,es04
      - cluster.initial_master_nodes=es01,es02,es03,es04
      - node.roles=master,data_cold
      - path.repo=/usr/share/elasticsearch/snapshots
      #- bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    mem_limit: 1Gb
    volumes:
      - cold_data:/usr/share/elasticsearch/data
      - snapshots:/usr/share/elasticsearch/snapshots
    networks:
      - elastic
  es04:
    image: docker.elastic.co/elasticsearch/elasticsearch:7.15.0
    container_name: es04
    environment:
      - node.name=es04
      - cluster.name=es-docker-cluster
      - discovery.seed_hosts=es01,es02,es03
      - cluster.initial_master_nodes=es01,es02,es03,es04
      - node.roles=master,data_frozen
      - path.repo=/usr/share/elasticsearch/snapshots
      - xpack.searchable.snapshot.shared_cache.size=10%
      #- bootstrap.memory_lock=true
      - "ES_JAVA_OPTS=-Xms512m -Xmx512m"
    ulimits:
      memlock:
        soft: -1
        hard: -1
    mem_limit: 1Gb
    volumes:
      - frozen_data:/usr/share/elasticsearch/data
      - snapshots:/usr/share/elasticsearch/snapshots
    networks:
      - elastic
  kib01:
    image: docker.elastic.co/kibana/kibana:7.15.0
    container_name: kib01
    ports:
      - 5601:5601
    environment:
      ELASTICSEARCH_URL: http://es01:9200
      ELASTICSEARCH_HOSTS: '["http://es01:9200","http://es02:9200","http://es03:9200","http://es04:9200"]'
    mem_limit: 1Gb
    networks:
      - elastic

volumes:
  hot_data:
    driver: local
  warm_data:
    driver: local
  cold_data:
    driver: local
  frozen_data:
    driver: local
  snapshots:
    driver: local

networks:
  elastic:
    driver: bridge

Notice that for each Elasticsearch container there is an environment variable set that defines which role the Elasticsearch service should play. In order not to have to bring up dedicated master eligible nodes (services) all services play a data role as well as the master role. In a real scenario you’d probably want to have dedicated master eligible nodes and separated data nodes (which will definitely have a stronger hardware resources profile in order to fulfill search queries).

This docker-compose file is based on the one suggested by Elastic in its official documentation. Other than node roles, the only thing added to it was a volume to hold snapshots, which was then included and mounted in each Elasticsearch service, along with the path.repo setting pointing to it. We’ll need this in order to take snapshots and search them in our frozen tier.

To bring the stack up you just need to be in the same directory as the docker-compose.yml file and run the following command:

docker-compose up -d

Creating network "hwc_elastic" with driver "bridge"
Creating volume "hwc_hot_data" with local driver
Creating volume "hwc_warm_data" with local driver
Creating volume "hwc_cold_data" with local driver
Creating volume "hwc_frozen_data" with local driver
Creating volume "hwc_snapshots" with local driver
Creating es03 ... done
Creating es01 ... done
Creating kib01 ... done
Creating es04 ... done
Creating es02 ... done

Once the stack is up, check to see if all containers are up and running. Run the following command and check if you have a similar output:

docker-compose ps

Name    Command                          State   Ports
---------------------------------------------------------------------------------------------------
es01    /bin/tini -- /usr/local/bi ...   Up      0.0.0.0:9200->9200/tcp,:::9200->9200/tcp, 9300/tcp
es02    /bin/tini -- /usr/local/bi ...   Up      9200/tcp, 9300/tcp
es03    /bin/tini -- /usr/local/bi ...   Up      9200/tcp, 9300/tcp
es04    /bin/tini -- /usr/local/bi ...   Up      9200/tcp, 9300/tcp
kib01   /bin/tini -- /usr/local/bi ...   Up      0.0.0.0:5601->5601/tcp,:::5601->5601/tcp

Here is a diagram showing what our cluster looks like:

One extra step we have to take is to change ownership of the snapshots directory. Docker-compose will mount it as root, but since the Elasticsearch service runs as the elasticsearch user it won’t be able to write to that directory. So, whenever you bring up the stack with a fresh snapshots volume, you’ll have to run the following command:

for service in es01 es02 es03 es04; do docker exec $service chown elasticsearch:root /usr/share/elasticsearch/snapshots/; done

If everything went fine, you’ll be able to access a Kibana instance by going to the following url in your browser:

http://localhost:5601

Setting up searchable snapshots and a repository

With our stack up and running we’re almost ready to experiment with the multi-tier architecture. But first we’ll need to set up a couple of things. So, you can now go to Kibana, access the Dev Tools app and we’ll get done with it in no time.

The first thing we need to take care of is starting the trial license, which is necessary because we are going to use the searchable snapshots feature in the frozen tier of our multi-tier architecture and this is not included in the basic license. Run the following command in order to start a trial period of 30 days that will give you access to all Elasticsearch premium features:

POST _license/start_trial?acknowledge=true

Response:

{
  "acknowledged" : true,
  "trial_was_started" : true,
  "type" : "trial"
}

If you prefer, you could also run the same command using curl from your terminal (the URL is quoted so the shell does not interpret the & character):

curl -X POST "http://localhost:9200/_license/start_trial?acknowledge=true&pretty"

The last thing we need before we dive in is to register a snapshot repository pointing to the path.repo we have configured for our nodes. Run the following command:

PUT _snapshot/orders-snapshots-repository

{
  "type": "fs",
  "settings": {
    "location": "/usr/share/elasticsearch/snapshots",
    "compress": true
  }
}

Response:

{
  "acknowledged" : true
}

*If you get a status 500 response saying that the path is not accessible, go back to the previous section and make sure that you’ve run the command that changes ownership of the snapshots directory inside the containers.

Part 3 – Demonstrating how a multi-tier architecture works (manually migrating data)

Now we can start experimenting with the multi-tier architecture we created. Our main goal is to watch data migrate from tier to tier and we’ll do that manually in this part and automatically in Part 4.

You can verify that all of our nodes have their roles properly configured by issuing the following command in Dev Tools:

GET _cat/nodes?v&h=name,node.role&s=name

Response:

name node.role
es01 hms
es02 mw
es03 cm
es04 fm

You’ll get a response listing our nodes and all the roles each of them play in our cluster:

- m: master node

- s: content tier

- h: hot data tier

- w: warm data tier

- c: cold data tier

- f: frozen data tier
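If you want to read that output programmatically, the letter codes above can be expanded with a small lookup table. This is a minimal Python sketch; the name decode_roles is hypothetical, and the table only covers the six letters listed above (other role letters exist but are not used in this stack).

```python
# Letter codes from the list above; other node roles are not covered here.
ROLE_LETTERS = {
    "m": "master",
    "s": "content tier",
    "h": "hot tier",
    "w": "warm tier",
    "c": "cold tier",
    "f": "frozen tier",
}


def decode_roles(abbreviation):
    """Expand a node.role string such as 'hms' into readable role names."""
    return [ROLE_LETTERS.get(letter, f"unknown ({letter})") for letter in abbreviation]


# Values taken from the _cat/nodes response shown in this section.
cat_nodes_output = {"es01": "hms", "es02": "mw", "es03": "cm", "es04": "fm"}
for node, letters in cat_nodes_output.items():
    print(node, decode_roles(letters))
```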

Migrating data through tiers manually (the hard way)

Here we’ll make use of shard allocation filtering to influence where (meaning in which nodes) the cluster should allocate our indices.

Shard allocation filtering works by setting index attributes that define the routing strategy Elasticsearch will follow when allocating shards. In a nutshell, you define node attributes in the elasticsearch.yml configuration file of each node in your cluster, and then configure the routing policy of your indices, either manually or through an index template, to route that index’s shards to nodes that include/exclude/require a certain value for that attribute.

Since Elasticsearch 7.10, data tiers are available as node roles, and that’s what we configured for each node (service) in our docker-compose stack. With that, we can now set a routing policy that uses those values for a hypothetical index. We do that by setting a special attribute called index.routing.allocation.include._tier_preference. This is an internal attribute that Elasticsearch will consider when deciding where to allocate indices. By default its value is set to data_content for indices in general. If the index was created through a data stream, then the default value for this attribute is set to data_hot. The possible values for this attribute are either one or more data tier role names in order of preference (data_content, data_hot, data_warm, data_cold or data_frozen) or null, if you want Elasticsearch to completely ignore data tier roles when allocating indices.

With that in mind, let’s experiment a little. We’ll simulate a scenario where we keep the user’s order history for a hypothetical e-commerce website in Elasticsearch indices. We create a new index each day to store the orders for that day. We want our indices to migrate from tier to tier according to the following policy:

- Orders placed today: keep them in hot tier

- Orders placed in the last 30 days (but not today): migrate them to warm tier

- Orders placed in the last 365 days (before this month): migrate them to cold tier

- Orders older than 365 days: snapshot, delete and migrate them to frozen tier

For this example scenario, we’re considering the current date is the 20th October 2021.
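The policy above boils down to a mapping from an index’s age to a target tier. The following Python sketch expresses it as a function; the name tier_for_index and the orders-YYYYMMDD naming pattern are assumptions made for illustration, and the thresholds are one reading of the policy (age counted in whole days).

```python
from datetime import date, datetime


def tier_for_index(index_name, today):
    """Map an orders-YYYYMMDD index to a tier, following the policy above."""
    index_date = datetime.strptime(index_name.split("-")[-1], "%Y%m%d").date()
    age_in_days = (today - index_date).days
    if age_in_days <= 0:
        return "data_hot"      # orders placed today
    if age_in_days <= 30:
        return "data_warm"     # last 30 days, but not today
    if age_in_days <= 365:
        return "data_cold"     # last 365 days
    return "data_frozen"       # older than 365 days (snapshot and delete first)


today = date(2021, 10, 20)  # the current date assumed in this scenario
for name in ["orders-20211020", "orders-20211010", "orders-20210601", "orders-20200601"]:
    print(name, "->", tier_for_index(name, today))
```

A function like this only decides the target tier; applying the decision still requires updating each index’s settings or, for the frozen tier, going through a snapshot.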

To make things easier we’ll define an index template that will be applied to every order index. This only maps a couple of fields and sets the number of shards and replicas to respectively 1 and 0 so we don’t have UNASSIGNED shards, since our example architecture is definitely not able to provide high-availability (but that’s ok, since it’s aimed only to be a learning sandbox and not a production environment). Run the following command in order to create the index template:

PUT _index_template/orders

{
  "index_patterns": ["orders-*"],
  "template": {
    "settings": {
      "number_of_shards": 1,
      "number_of_replicas": 0
    },
    "mappings": {
      "properties": {
        "client_id": {
          "type": "integer"
        },
        "created_at": {
          "type": "date"
        }
      }
    }
  }
}

We can now create some indices that will represent different moments in time of our e-commerce orders history. Run all the commands below to create the indices we’ll need to move on:

# Today
PUT orders-20211020

# Last 30 days
PUT orders-20211010

# Last 365 days
PUT orders-20210601

# Older than 365 days
PUT orders-20200601

We don’t even need data on those indices to simulate a multi-tier data migration workflow, but let’s assume those indices hold data that represent the user’s orders in each day the index’s name indicates.

Before we move on, let’s check where Elasticsearch decided to allocate the shards for those four indices:

GET _cat/shards/orders*?v&s=index&h=index,node

Response:

index           node
orders-20200601 es01
orders-20210601 es01
orders-20211010 es01
orders-20211020 es01

You can see that they were all allocated to node es01, as they should be, because that is the node we’ve configured to act as our content tier and, for indices that are not part of data streams, that is the preferred data tier for Elasticsearch to allocate indices to.

So far so good, but now let’s apply our defined multi-tier allocation policy. We are doing it manually here in order to explain how it works, but in the next section we’ll take a look at how to have all of this taken care of automatically by Elasticsearch.

Migrating our indices to the proper data tiers is just a matter of setting each index’s routing accordingly. You can imagine you have a background job that takes care of this daily. This job would check the list of indices and then set the shard allocation routing according to the date present in the index’s name and the current date. In the end, this job would have issued the following commands to reorganize the indices in our multi-tier architecture:

PUT orders-20211020/_settings
{
  "index.routing.allocation.include._tier_preference": "data_hot"
}

PUT orders-20211010/_settings
{
  "index.routing.allocation.include._tier_preference": "data_warm"
}

PUT orders-20210601/_settings
{
  "index.routing.allocation.include._tier_preference": "data_cold"
}

Notice that we didn’t issue a command to move the orders-20200601 index (last year’s orders) to the frozen tier. We’ll do that in a moment. Let’s first check the shard allocation after those commands were processed by the cluster, so we can verify that they were all moved to nodes with the expected roles:

GET _cat/shards/orders*?v&s=index&h=index,node

Response:

index           node
orders-20200601 es01
orders-20210601 es03
orders-20211010 es02
orders-20211020 es01

The orders-20211020, orders-20211010 and orders-20210601 indices are now allocated to nodes es01, es02 and es03 respectively, which represent the hot, warm and cold data tiers, exactly as we intended.

So, what’s the deal with the index that represents the orders placed last year? Well, the allocation policy we defined states that it should be moved to the frozen tier, since it holds data that the user rarely wants to query. Let’s see what happens if we try to change the routing allocation setting of that index to move the shards to our frozen tier:

PUT orders-20200601/_settings

{
  "index.routing.allocation.include._tier_preference": "data_frozen"
}

Response:

{
  "error" : {
    "root_cause" : [
      {
        "type" : "illegal_argument_exception",
        "reason" : "[data_frozen] tier can only be used for partial searchable snapshots"
      }
    ],
    "type" : "illegal_argument_exception",
    "reason" : "[data_frozen] tier can only be used for partial searchable snapshots"
  },
  "status" : 400
}

As you can see, in Elasticsearch’s implementation of a multi-tier architecture the frozen tier is reserved exclusively for partial searchable snapshots, not for regular indices, so we cannot move the index there.

Elasticsearch 7.10 introduced searchable snapshots as part of its Enterprise subscription. Searchable snapshots allow you to keep your less frequently queried data in a low cost object store (such as AWS S3) in the form of snapshots, which will be brought back online only when necessary.

In order to search snapshots, you first need to mount them as indices. There are two ways of doing that:

- Fully mounted index: a full copy of the snapshotted index will be loaded. This is the option ILM uses for the hot and cold phases.

- Partially mounted index: loads only parts of the snapshotted index onto a local cache that is shared among nodes that collaborate in the frozen tier. If the search requires data that is not in the cache, it will be fetched from the snapshot. This is the option ILM uses for the frozen phase.

That’s an opportunity to simulate what would have happened in a real life scenario. You probably would have a snapshot policy in place, so by now that index would have been included in a snapshot stored in our repository. Let’s do it manually:

PUT _snapshot/orders-snapshots-repository/orders-older-than-365d?wait_for_completion=true

{
  "indices": "orders-20200601",
  "ignore_unavailable": true,
  "include_global_state": false,
  "metadata": {
    "taken_by": "Snapshoter",
    "taken_because": "Data older than 365 days will likely not be searched anymore"
  }
}

Response:

{
  "snapshot" : {
    "snapshot" : "orders-older-than-365d",
    "uuid" : "m2jcWCdRTryeqgC9qQcGUg",
    "repository" : "orders-snapshots-repository",
    "version_id" : 7150099,
    "version" : "7.15.0",
    "indices" : [
      "orders-20200601"
    ],
    "data_streams" : [ ],
    "include_global_state" : false,
    "metadata" : {
      "taken_by" : "Snapshoter",
      "taken_because" : "Data older than 365 days will likely not be searched anymore"
    },
    "state" : "SUCCESS",
    "start_time" : "2021-10-05T12:57:55.821Z",
    "start_time_in_millis" : 1633438675821,
    "end_time" : "2021-10-05T12:57:55.821Z",
    "end_time_in_millis" : 1633438675821,
    "duration_in_millis" : 0,
    "failures" : [ ],
    "shards" : {
      "total" : 1,
      "failed" : 0,
      "successful" : 1
    },
    "feature_states" : [ ]
  }
}

Now that the index is safely stored in our repository we can get rid of it (as it would probably have happened in a real life snapshot & restore policy):

DELETE orders-20200601

Just to recap, we still have our three indices allocated to nodes that in our experimental environment represent the hot, warm and cold data tiers, and our fourth index was deleted after we took a snapshot of it. Our goal now is to make this index available once again, only this time in our frozen tier and as a partially mounted index.

POST _snapshot/orders-snapshots-repository/orders-older-than-365d/_mount?wait_for_completion=true&storage=shared_cache

{
  "index": "orders-20200601",
  "index_settings": {
    "index.number_of_replicas": 0,
    "index.routing.allocation.include._tier_preference": "data_frozen"
  },
  "ignore_index_settings": [ "index.refresh_interval" ]
}

Response:

{
  "snapshot" : {
    "snapshot" : "orders-older-than-365d",
    "indices" : [
      "orders-20200601"
    ],
    "shards" : {
      "total" : 1,
      "failed" : 0,
      "successful" : 1
    }
  }
}

Now let’s check our indices’ allocation one last time:

GET _cat/shards/orders*?v&s=index&h=index,node

Response:

index           node
orders-20200601 es04
orders-20210601 es03
orders-20211010 es02
orders-20211020 es01

Finally, all of our indices are allocated according to our allocation policy:

- orders-20211020 (today’s orders) → es01 → hot data tier

- orders-20211010 (this month’s orders) → es02 → warm data tier

- orders-20210601 (this year’s orders) → es03 → cold data tier

- orders-20200601 (last year’s and before orders) → es04 → frozen data tier

So, to manually apply our allocation policy, here are the steps we took:

- We created an index template to set some common characteristics of our indices.

- We then created four indices representing different points in time.

- For three of them we managed to allocate to the appropriate hot/warm/cold tiers.

- For the one representing data from last year we took a snapshot and deleted it.

- Lastly, we mounted the index from the snapshot into our frozen tier.

In the next part we’ll see how to automate this whole process by using Elasticsearch’s Index Lifecycle Management feature.

Part 4 – Migrating data through tiers automatically

This approach is heavily based on Index Lifecycle Management (ILM). ILM organizes the lifecycle of your indices in five phases and lets you configure both the transition criteria between phases and a set of actions to be executed when the index reaches each phase.

Currently you can set up an ILM policy composed of the following five phases:

- Hot: The index is actively being updated and queried.

- Warm: The index is no longer being updated but is still being queried.

- Cold: The index is no longer being updated and is queried infrequently. The information still needs to be searchable, but it’s okay if those queries are slower.

- Frozen: The index is no longer being updated and is queried rarely. The information still needs to be searchable, but it’s okay if those queries are extremely slow.

- Delete: The index is no longer needed and can safely be removed.

You can already see that ILM is definitely what we need to manage the flow of our data from tier to tier. For each phase you can define and configure a set of actions to be executed. The list below shows which actions are allowed in each phase; refer to the official documentation to learn more about each action.

- Hot: Set Priority, Unfollow, Rollover, Read-Only, Shrink, Force Merge, Searchable Snapshot

- Warm: Set Priority, Unfollow, Read-Only, Allocate, Migrate, Shrink, Force Merge

- Cold: Set Priority, Unfollow, Read-Only, Allocate, Migrate, Searchable Snapshot, Freeze

- Frozen: Searchable Snapshot

- Delete: Wait For Snapshot, Delete

For the purpose of this guide, Rollover, Migrate and Searchable Snapshot are the actions we are especially interested in. In a nutshell, the Rollover action creates a new index every time a condition (age, number of documents, size) is reached. The Searchable Snapshot action ensures that indices stored in your snapshots are mounted as needed (this is a premium feature and requires a subscription) and brought back online so queries can be served.

Now, the Migrate action is the one we are really interested in. This action migrates indices to the tier that corresponds to that phase by setting the index.routing.allocation.include._tier_preference accordingly. ILM automatically injects the Migrate action in the warm and cold phases if no allocation options are specified with the Allocate action. Some other aspects of the Migrate action you should be aware of:

- If the cold phase defines a Searchable Snapshot action, the Migrate action will not be injected automatically, because the index will be mounted directly on the target tier using the same index.routing.allocation.include._tier_preference infrastructure that the Migrate action configures.

- In the warm phase, the index’s index.routing.allocation.include._tier_preference setting is set to data_warm and data_hot, which ensures that the index is allocated to one of those tiers, respecting that order of precedence.

- In the cold phase, the index’s index.routing.allocation.include._tier_preference setting is set to data_cold, data_warm and data_hot, which ensures that the index is allocated to one of those tiers, respecting that order of precedence.

- It is not allowed in the frozen phase, since this phase mounts the snapshot, sets the index’s index.routing.allocation.include._tier_preference to data_frozen, data_cold, data_warm and data_hot, and tries to allocate the index to one of those tiers, respecting that order of precedence.
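The fallback order described in the bullets above can be summarized in a short Python sketch. The names `TIER_ORDER` and `tier_preference` are illustrative only and are not part of Elasticsearch or any of its clients; the sketch simply reproduces the preference strings listed above.

```python
# Data tiers ordered from hottest to coldest.
# Illustrative helper names; not an Elasticsearch API.
TIER_ORDER = ["data_hot", "data_warm", "data_cold", "data_frozen"]


def tier_preference(phase: str) -> str:
    """Return the _tier_preference value described above for an ILM phase."""
    target = f"data_{phase}"
    if target not in TIER_ORDER:
        raise ValueError(f"no data tier corresponds to phase {phase!r}")
    position = TIER_ORDER.index(target)
    # Preferred tier first, then every hotter tier as a fallback.
    return ",".join(reversed(TIER_ORDER[: position + 1]))


if __name__ == "__main__":
    for phase in ("hot", "warm", "cold", "frozen"):
        print(phase, "->", tier_preference(phase))
```

Reading the result from left to right gives the order in which tiers are tried, so an index in the cold phase can still be allocated if the cluster has no cold nodes.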

Our policy will be strictly guided by the age of our indices. We want them to migrate from tier to tier as they get older and older. Considering our proposed policy, an index should migrate from hot to warm after 1 day, from warm to cold after 30 days and from cold to frozen after 365 days. When it reaches the frozen phase the index could also be deleted (since it will still be searchable by mounting it from the snapshots). Just to make things interesting, let’s also add a rollover action in the hot phase of our ILM policy so a new write index is created every hour.

For the sake of this demo, let’s consider the following time adaptations (so we can see everything happening in less than 5 minutes instead of 365 days):

- Rollover every hour → every 30 seconds

- Migrate from hot to warm after 1 day → after 1 minute

- Migrate from warm to cold after 30 days → after 2 minutes

- Migrate from cold to frozen and then deleted after 365 days → after 3 minutes
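As a rough illustration of the compressed schedule above, the following Python sketch maps an index age to the phase whose threshold it has reached. `DEMO_MIN_AGE` and `expected_phase` are hypothetical names introduced here. The sketch also ignores two things real ILM does: it only evaluates conditions at each poll interval, and for rolled-over indices it measures age from the time of rollover, so actual transitions happen somewhat later than this lookup suggests.

```python
# Demo thresholds in seconds, taken from the adapted schedule above.
# Hypothetical helper; real ILM timing also depends on the poll interval.
DEMO_MIN_AGE = {"warm": 60, "cold": 120, "frozen": 180}


def expected_phase(age_seconds: int, min_age: dict = DEMO_MIN_AGE) -> str:
    """Return the latest phase whose min_age threshold has been reached."""
    phase = "hot"
    # Walk the thresholds from smallest to largest and keep the last one reached.
    for name, threshold in sorted(min_age.items(), key=lambda item: item[1]):
        if age_seconds >= threshold:
            phase = name
    return phase


if __name__ == "__main__":
    for age in (0, 30, 60, 90, 120, 180):
        print(f"{age:>3}s -> {expected_phase(age)}")
```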

The last piece of the puzzle is that we’re going to need snapshots in order to have a frozen tier where snapshotted data can be searched. We could easily take a snapshot manually, as we did in the last section, but here we are trying to automate things. Elasticsearch provides a way of taking snapshots automatically by configuring Snapshot Lifecycle Management (SLM) policies. It’s very easy to do that, but unfortunately we cannot schedule an SLM policy to execute more often than every 15 minutes, which will not work for our test scenario. We’ll work around this issue later; for now, just run the following command so we have a snapshot policy in place to use in the delete phase of our ILM policy:

PUT _slm/policy/orders-snapshot-policy

{

"schedule": "0 * * * * ?",

"name": "<orders-snapshot-{now/d}>",

"repository": "orders-snapshots-repository",

"config": {

"indices": ["orders"]

},

"retention": {

"expire_after": "30d",

"min_count": 5,

"max_count": 50

}

}

With all that being said, we’re now going to start by creating an ILM policy to manage our orders indices.

PUT _ilm/policy/orders-lifecycle-policy

{

"policy": {

"phases": {

"hot": {

"actions": {

"rollover": {

"max_age": "30s"

}

}

},

"warm": {

"min_age": "1m",

"actions": {}

},

"cold": {

"min_age": "2m",

"actions": {}

},

"frozen": {

"min_age": "3m",

"actions": {

"searchable_snapshot": {

"snapshot_repository": "orders-snapshots-repository"

}

}

},

"delete": {

"min_age": "3m",

"actions": {

"wait_for_snapshot": {

"policy": "orders-snapshot-policy"

},

"delete": {}

}

}

}

}

}

Notice we didn’t include the Migrate action in our phases, but remember it is automatically injected by ILM in the warm and cold phases.
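To make the implicit behaviour more tangible, here is a small Python sketch that applies the injection rules described earlier in this part to a policy body. It is only a model of those rules as stated in this guide, not ILM’s actual implementation; `phases_with_implicit_migrate` and `ALLOCATION_OPTIONS` are names made up for this example, and the assumption that "allocation options" means the include, exclude and require keys of the Allocate action is ours.

```python
# Keys of the Allocate action treated here as "allocation options" (assumption).
ALLOCATION_OPTIONS = {"include", "exclude", "require"}

# The demo policy from this section, reduced to the parts that matter here.
DEMO_POLICY = {
    "policy": {
        "phases": {
            "hot": {"actions": {"rollover": {"max_age": "30s"}}},
            "warm": {"min_age": "1m", "actions": {}},
            "cold": {"min_age": "2m", "actions": {}},
            "frozen": {
                "min_age": "3m",
                "actions": {
                    "searchable_snapshot": {
                        "snapshot_repository": "orders-snapshots-repository"
                    }
                },
            },
        }
    }
}


def phases_with_implicit_migrate(policy: dict) -> list:
    """List the phases where, per the rules above, Migrate would be injected."""
    phases = policy["policy"]["phases"]
    injected = []
    for name in ("warm", "cold"):  # only these phases get an implicit Migrate
        actions = phases.get(name, {}).get("actions")
        if actions is None:
            continue  # phase is not defined in the policy
        if "migrate" in actions or "searchable_snapshot" in actions:
            continue  # explicit Migrate, or the index is mounted directly
        if ALLOCATION_OPTIONS & set(actions.get("allocate", {})):
            continue  # custom allocation rules take over
        injected.append(name)
    return injected


if __name__ == "__main__":
    print(phases_with_implicit_migrate(DEMO_POLICY))
```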

By default, ILM checks every 10 minutes whether there is any action to execute. In our example we use very small intervals (seconds and minutes) so we can watch Elasticsearch move our indices along their lifecycle phases. Because of that, we also have to set a transient cluster setting that shortens the interval at which ILM checks for actions to execute. Run the following command before moving on:

PUT _cluster/settings

{

"transient": {

"indices.lifecycle.poll_interval": "15s"

}

}

Now that we have the ILM part of our demo set up, how do we use it? You can assign an ILM policy to an index by defining the index.lifecycle.name attribute as the ILM policy name in the index’s settings:

PUT orders-20211020/_settings

{

"index.lifecycle.name": "orders-lifecycle-policy"

}

We want this policy to be applied to every orders index that gets created, but we don’t want to do it manually. The proper way of solving this is to use an index template. With an index template you can define common settings and mappings that are applied to every new index whose name matches a pattern defined in the template. That’s exactly what we need, because the hot phase of our ILM policy executes a Rollover action, which creates a new write index every time a condition (age, number of documents or size) is reached. This new index needs to have its lifecycle policy and mappings set the same way as the indices created before it. Since the newly created indices follow a naming pattern (orders-*), it’s easy to create an index template that makes sure all common characteristics are present in every rolled-over index.
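The idea of selecting indices by name pattern can be illustrated with a few lines of Python. This sketch uses the standard library’s `fnmatchcase` as an approximation of wildcard matching; it is not how Elasticsearch evaluates index patterns internally, and `matching_indices` as well as the sample index names are made up for the example.

```python
from fnmatch import fnmatchcase


def matching_indices(pattern: str, index_names: list) -> list:
    """Return the names that match a simple wildcard pattern such as orders-*."""
    return [name for name in index_names if fnmatchcase(name, pattern)]


if __name__ == "__main__":
    # Hypothetical index names, used only for illustration.
    names = ["orders-20211020", "orders-000001", "customers-000001", "orders"]
    print(matching_indices("orders-*", names))
```

Only names that start with the `orders-` prefix are selected, which is why a consistent naming scheme matters once rollover starts creating indices on its own.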

To create our index template we first need to create the components of the template and then use them when we finally create the template. Run the commands below to create settings and mappings components:

PUT _component_template/orders-settings

{

"template": {

"settings": {

"index.lifecycle.name": "orders-lifecycle-policy",

"number_of_shards": 1,

"number_of_replicas": 0

}

},

"_meta": {

"description": "Settings for orders indices"

}

}

PUT _component_template/orders-mappings

{

"template": {

"mappings": {

"properties": {

"@timestamp": {

"type": "date",

"format": "date_optional_time||epoch_millis"

},

"client_id": {

"type": "integer"

},

"created_at": {

"type": "date"

}

}

}

},

"_meta": {

"description": "Mappings for orders indices"

}

}

Now run the following command to create the index template for the orders indices that will get created by the data stream:

PUT _index_template/orders

{

"index_patterns": ["orders"],

"data_stream": { },

"composed_of": [ "orders-settings", "orders-mappings" ],

"priority": 500,

"_meta": {

"description": "Template for orders data stream"

}

}

We are good to go. We have our index lifecycle policy created and referenced in an index template that matches the orders data stream, so it will be applied to every backing index created for it. It’s time to finally create our index and check if all this wiring works.

Here we have two options. The first one is to create the index manually with its name matching the pattern ^.*-\d+$ (for example orders-000001) and then create an alias pointing to it (orders → orders-000001). All your applications would then use only the alias, and the ILM policy would be in charge of rolling over the underlying indices.
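The naming requirement of this first option can be sketched in Python. The regular expression below is the same ^.*-\d+$ pattern with capture groups added, and the six-digit zero padding follows the numbering convention documented for rollover. The function name `next_rollover_name` is hypothetical; in practice Elasticsearch computes the new name itself.

```python
import re

# Same shape as ^.*-\d+$, with groups for the prefix and the counter.
ROLLOVER_NAME = re.compile(r"^(.*-)(\d+)$")


def next_rollover_name(index_name: str) -> str:
    """Return the name a rollover would give to the next index."""
    match = ROLLOVER_NAME.match(index_name)
    if match is None:
        raise ValueError(f"{index_name!r} does not end with -<number>")
    prefix, counter = match.groups()
    # Increment the counter and zero-pad it to six digits.
    return f"{prefix}{int(counter) + 1:06d}"


if __name__ == "__main__":
    print(next_rollover_name("orders-000001"))
```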

The second option is to use data streams, which were introduced in Elasticsearch 7.9 and make it easier to implement this pattern, where append-only data is spread across multiple indices and made available to clients as a single named resource. When you create a data stream, Elasticsearch actually creates a hidden index to store the documents.

We’ll go with the second one. Remember we already have an ILM policy in place and it is being executed by Elasticsearch every 15 seconds. Once we create our data stream things will start to move fast. Since we want to follow all steps, let’s keep a terminal open watching what’s happening with our indices:

clear ; watch -d curl -s 'http://localhost:9200/_cat/shards/orders*\?v\&s=index\&h=index,node'

Now, finally, create a data stream for the orders index.

PUT _data_stream/orders

Here is a workaround for our SLM policy. We cannot wait 15 minutes to have a snapshot taken, so we take one manually as soon as the first index has been created through our data stream.

In a more realistic scenario this wouldn’t be necessary: we’d let the SLM policy take care of snapshots for us. But since the aging constraints of our fictitious ILM policy are expressed in minutes (and even seconds), we have to go this way. We need snapshots because otherwise ILM will not be able to move our indices to the frozen phase, which depends on them.
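The snapshot request that follows uses a date math name (<orders-snapshot-{now/d}>). If you send such a request with a plain HTTP client rather than from a console that handles it for you, the special characters in the name generally need to be percent-encoded in the request path, as the Elasticsearch documentation on date math names describes. A minimal Python sketch of that encoding, where `encode_path_segment` is a made-up helper name:

```python
from urllib.parse import quote


def encode_path_segment(name: str) -> str:
    """Percent-encode a name so it can be used as one URL path segment."""
    # safe="" makes sure that "/" inside {now/d} is encoded as well.
    return quote(name, safe="")


if __name__ == "__main__":
    print(encode_path_segment("<orders-snapshot-{now/d}>"))
```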

Run the following command to create a simple snapshot, which should be enough:

PUT _snapshot/orders-snapshots-repository/<orders-snapshot-{now/d}>?wait_for_completion=true

{

"indices": "orders",

"ignore_unavailable": true,

"include_global_state": false,

"metadata": {

"taken_by": "Snapshoter",

"taken_because": "Simulating what the SLM policy would do"

}

}

Now we can finally watch the terminal and see Elasticsearch applying our ILM policy. You should see the following events happening:

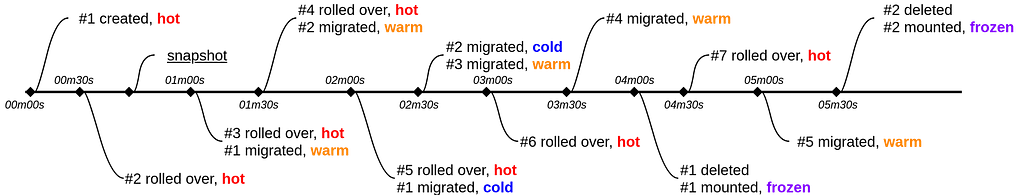

(00m00s: the data stream immediately creates write index that is allocated in the hot tier)

index node

.ds-orders-2021.10.07-000001 es01

(00m30s: the write index backing the data stream is rolled over, also allocated in the hot tier)

index node

.ds-orders-2021.10.07-000001 es01

.ds-orders-2021.10.07-000002 es01

*** Here we perform a snapshot manually, simulating what would've happened automatically through our SLM policy, but that we couldn't rely on for the purpose of this demo because the minimal interval you can schedule it is 15 minutes ***

(01m00s: index is rolled over one more time)

index node

.ds-orders-2021.10.07-000001 es01

.ds-orders-2021.10.07-000002 es01

.ds-orders-2021.10.07-000003 es01

(01m00s: also, the oldest index is migrated to the warm tier)

index node

.ds-orders-2021.10.07-000001 es02

.ds-orders-2021.10.07-000002 es01

.ds-orders-2021.10.07-000003 es01

(01m30s: one more roll over)

index node

.ds-orders-2021.10.07-000001 es02

.ds-orders-2021.10.07-000002 es01

.ds-orders-2021.10.07-000003 es01

.ds-orders-2021.10.07-000004 es01

(01m30s: the second index, now 1 minute old, is migrated to the warm tier)

index node

.ds-orders-2021.10.07-000001 es02

.ds-orders-2021.10.07-000002 es02

.ds-orders-2021.10.07-000003 es01

.ds-orders-2021.10.07-000004 es01

(02m00s: one more roll over and the first index, now 2 minutes old, is migrated to the cold tier)

index node

.ds-orders-2021.10.07-000001 es03

.ds-orders-2021.10.07-000002 es02

.ds-orders-2021.10.07-000003 es01

.ds-orders-2021.10.07-000004 es01

.ds-orders-2021.10.07-000005 es01

(02m30s: the second index, now 2 minutes old, is migrated to the cold tier and the third index, now 1 minute old, is migrated to the warm tier)

index node

.ds-orders-2021.10.07-000001 es03

.ds-orders-2021.10.07-000002 es03

.ds-orders-2021.10.07-000003 es02

.ds-orders-2021.10.07-000004 es01

.ds-orders-2021.10.07-000005 es01

(03m00s: one more roll over)

index node

.ds-orders-2021.10.07-000001 es03

.ds-orders-2021.10.07-000002 es03

.ds-orders-2021.10.07-000003 es02

.ds-orders-2021.10.07-000004 es01

.ds-orders-2021.10.07-000005 es01

.ds-orders-2021.10.07-000006 es01

(03m30s: the fourth index, now 1 minute old, is migrated to the warm tier)

index node

.ds-orders-2021.10.07-000001 es03

.ds-orders-2021.10.07-000002 es03

.ds-orders-2021.10.07-000003 es02

.ds-orders-2021.10.07-000004 es02

.ds-orders-2021.10.07-000005 es01

.ds-orders-2021.10.07-000006 es01

(04m00s: the first index, now 4 minutes old, is deleted and the snapshot where it is backed up is mounted for search in the frozen tier)

index node

.ds-orders-2021.10.07-000002 es03

.ds-orders-2021.10.07-000003 es03

.ds-orders-2021.10.07-000004 es02

.ds-orders-2021.10.07-000005 es01

.ds-orders-2021.10.07-000006 es01

partial-.ds-orders-2021.10.07-000001 es04

(04m30s: one more roll over)

index node

.ds-orders-2021.10.07-000002 es03

.ds-orders-2021.10.07-000003 es03

.ds-orders-2021.10.07-000004 es02

.ds-orders-2021.10.07-000005 es01

.ds-orders-2021.10.07-000006 es01

.ds-orders-2021.10.07-000007 es01

partial-.ds-orders-2021.10.07-000001 es04

(05m00s: the fifth index, now 1 minute old, is migrated to the warm tier)

index node

.ds-orders-2021.10.07-000002 es03

.ds-orders-2021.10.07-000003 es03

.ds-orders-2021.10.07-000004 es02

.ds-orders-2021.10.07-000005 es02

.ds-orders-2021.10.07-000006 es01

.ds-orders-2021.10.07-000007 es01

partial-.ds-orders-2021.10.07-000001 es04

(05m30s: the second index, now 4 minutes old, is deleted and the snapshot where it is backed up is mounted for search in the frozen tier)

index node

.ds-orders-2021.10.07-000003 es03

.ds-orders-2021.10.07-000004 es03

.ds-orders-2021.10.07-000005 es02

.ds-orders-2021.10.07-000006 es01

.ds-orders-2021.10.07-000007 es01

partial-.ds-orders-2021.10.07-000001 es04

partial-.ds-orders-2021.10.07-000002 es04

And so on...

If you prefer a more visual representation of that chain of events, it looks like this:

Part 5 – How to optimize your cluster architecture to reduce Elasticsearch costs

Watch the video below to learn how to save money on your deployment by optimizing your cluster architecture.

Summary

To automatically apply our allocation policy, we:

- Created a Snapshot Lifecycle Management (SLM) policy that automatically creates a snapshot of our data as scheduled.

- Created an Index Lifecycle Management (ILM) policy that executes the defined actions in each of the five phases our indices pass through during their existence, from creation to deletion.

- Defined an index template that, among other things, sets our ILM policy as the one managing the lifecycle of every new index whose name matches the pattern defined in the template, so we don’t need to do this manually.

- Finally, created a data stream and watched the backing indices being rolled over, migrated, snapshotted and deleted as our ILM policy executed the defined actions whenever an index’s age reached the configured threshold.