Briefly, this error occurs when Elasticsearch is unable to parse a specific field in a document due to a mismatch between the actual data type and the expected data type. This could be due to incorrect data formatting or a wrong mapping. To resolve this, you can either correct the data type in the document or update the mapping to match the data type. Additionally, you can use the “ignore_malformed” option to ignore such errors, but this could lead to data loss or incorrect search results.


This guide will help you understand the origin of the log “failed to parse field [field] of type [type] in document with id [id]”: why it happens, which Elasticsearch concepts it relates to, and how to debug and avoid it.

Overview

We index documents in Elasticsearch by providing data as JSON objects to one of its indexing APIs. JSON objects are simple representations of data, and support only the following data types: number, string, boolean, array, object and null. When Elasticsearch receives a document request body for indexing, it needs to parse it first. Sometimes it also needs to transform some of the fields into more complex data types prior to indexing.

During this parsing process, any value provided that does not comply with the field’s type constraints will throw an error and prevent the document from being indexed.

What it means

In a nutshell, this error just means that you provided a value which could not be parsed by Elasticsearch for the field’s type and thus it could not index the document.

Why it occurs

There are a multitude of reasons why this error can happen, but they are always related to what you provided as a value for a field and what the constraints for that field type are. This should become more clear when we explain how to replicate the error, but before that, let’s review some concepts related to field types and mapping.

Field types

Every field in an index has a specific type. Even if you didn’t define it explicitly, Elasticsearch will infer a field type and configure that field with it. Elasticsearch currently supports more than 30 field types, from the ones you would probably expect, like boolean, date, text, keyword and the numeric types, to some very specific ones, like ip, geo_shape, search_as_you_type and histogram.

Each type has its own set of rules, constraints, thresholds and configuration parameters. You should always check the type’s documentation page to make sure the values you will index are going to comply with the field type’s constraints.

Mapping

Mapping is the act of defining the type for the index’s fields. This can be done in two ways:

- Dynamically: you will let Elasticsearch infer and choose the type of the field for you, no need to explicitly define them beforehand.

- Explicitly: you will explicitly define the field’s type before indexing any value to it.

Dynamic mapping is really great when you are starting a project. You can index your dataset without worrying too much about the specifics of each field, letting Elasticsearch handle that for you. You map your fields dynamically by just indexing values to a non-existent index, or to a non-existent field:

PUT test/_doc/1

{

"non_existent_field_1": "some text",

"non_existent_field_2": 123,

"non_existent_field_3": true

}

You can then take a look at how Elasticsearch mapped each of those fields:

GET test/_mapping

Response:

{

"test" : {

"mappings" : {

"properties" : {

"non_existent_field_1" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

},

"non_existent_field_2" : {

"type" : "long"

},

"non_existent_field_3" : {

"type" : "boolean"

}

}

}

}

}

The non_existent_field_3 field was mapped to a boolean, which is probably fine, since the value we provided (true) was not ambiguous. But notice that the non_existent_field_1 field was mapped as text with a sub-field of type keyword. The difference between these two field types is that the first one is analyzed, meaning it will be processed through Elasticsearch’s analysis pipeline (by default the standard analyzer, which breaks the text into tokens and lowercases them; stop word removal is available but disabled by default), and the second one will be indexed as it is. This may or may not be what you want.

In many cases you are either indexing a piece of text, for which you want to provide full-text search capabilities, or you want to just filter by a given keyword, such as a category or department name. Elasticsearch can’t know what your use case is, so that’s why it mapped that field twice.

The non_existent_field_2 is another case for which Elasticsearch had to choose one of the many numeric field types because it can’t know what your use case is. In this case, it chose the long field type. The long field type has a maximum value of 2^63-1, but imagine if your field only stored a person’s age, or a score from 0 to 10. Using a long field type would be a waste of space, so you should always try to pick the type that makes the most sense for the data the field is supposed to contain.
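As a rough illustration of picking the narrowest type, the sketch below maps an expected value range to one of Elasticsearch's whole-number field types using their documented ranges. The function name `smallest_integer_type` is hypothetical and exists only for this example; it is not part of any Elasticsearch client.

```python
# Documented ranges of Elasticsearch's signed whole-number field types.
INTEGER_TYPES = [
    ("byte", -2**7, 2**7 - 1),
    ("short", -2**15, 2**15 - 1),
    ("integer", -2**31, 2**31 - 1),
    ("long", -2**63, 2**63 - 1),
]


def smallest_integer_type(min_value: int, max_value: int) -> str:
    """Return the narrowest whole-number type able to hold the whole range.

    Hypothetical helper for illustration only.
    """
    if min_value > max_value:
        raise ValueError("min_value must not exceed max_value")
    for name, low, high in INTEGER_TYPES:
        if low <= min_value and max_value <= high:
            return name
    raise ValueError("range does not fit in a long")


if __name__ == "__main__":
    print(smallest_integer_type(0, 10))    # a score from 0 to 10
    print(smallest_integer_type(0, 130))   # a person's age with some headroom
```

A score from 0 to 10 fits in a byte, while an age field that allows values above 127 already needs a short.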

Another example of when dynamically mapping your index can cause you some problems:

PUT test1/_doc/1

{

"my_ip_address": "179.152.62.82",

"my_location": "-30.0346471,-51.2176584"

}

Looks like we’d like to index an IP address and a geo point, right?

GET test1/_mapping

Response:

{

"test1" : {

"mappings" : {

"properties" : {

"my_ip_address" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

},

"my_location" : {

"type" : "text",

"fields" : {

"keyword" : {

"type" : "keyword",

"ignore_above" : 256

}

}

}

}

}

}

}

With default settings, any string value you provide is mapped as a string (a text field with a keyword sub-field, same as non_existent_field_1 in the previous example). The only exception is a string that matches one of the date detection formats, which is mapped as a date.
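The behavior seen in the two mapping responses above can be approximated with a few lines of Python. This is a simplified sketch, not Elasticsearch's actual implementation: the function name `guess_dynamic_type` is hypothetical, and date detection, arrays and null values are deliberately left out.

```python
def guess_dynamic_type(value):
    """Approximate the type default dynamic mapping assigns to a JSON value.

    Simplified, hypothetical sketch: date detection, arrays and nulls
    are not handled.
    """
    # bool must be checked before int because bool is a subclass of int in Python.
    if isinstance(value, bool):
        return "boolean"
    if isinstance(value, int):
        return "long"
    if isinstance(value, float):
        return "float"
    if isinstance(value, str):
        # Strings become text with a keyword sub-field, whatever they look like.
        return "text"
    if isinstance(value, dict):
        return "object"
    raise TypeError(f"unsupported value in this sketch: {value!r}")


if __name__ == "__main__":
    document = {
        "my_ip_address": "179.152.62.82",
        "my_location": "-30.0346471,-51.2176584",
        "non_existent_field_2": 123,
    }
    for field, value in document.items():
        print(field, "->", guess_dynamic_type(value))
```

The point of the sketch is that the content of a string plays no role: an IP address and a coordinate pair both end up as text.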

That’s usually when you start to realize that in most cases you definitely prefer to map your fields explicitly. Here is how you could create an index and explicitly map the index’s fields to types that could make more sense:

PUT test

{

"mappings": {

"properties": {

"description": { "type": "text" },

"username": { "type": "keyword" },

"age": { "type": "byte" },

"location": { "type": "geo_point" },

"source_ip": { "type": "ip" }

}

}

}

Replicating the error

This error is actually quite simple to replicate. You only need to make sure you are somehow not respecting Elasticsearch’s constraints for the value of a given field type and then you will most certainly receive the message.

Let’s try to replicate the error in several ways. First of all, we need an index. We will map the fields of this index explicitly so we can define some very specific field types we’ll try to break. If a test index already exists from the earlier examples, delete it first (DELETE test), because creating an index that already exists fails. Below you can find the command that will create our index with three different fields: a boolean field, a numeric (byte) field and an IP field.

PUT test

{

"mappings": {

"properties": {

"boolean_field": { "type": "boolean" },

"byte_field": { "type": "byte" },

"ip_field": { "type": "ip" }

}

}

}

Now let’s start to break things. First, let’s see if we can index a document that only defines a value for our boolean field. Below you will find our requests and the error message extracted from each response.

PUT test/_doc/1

{

"boolean_field": "off"

}

Response:

{

"error" : {

"root_cause" : [

{

"type" : "mapper_parsing_exception",

"reason" : "failed to parse field [boolean_field] of type [boolean] in document with id '1'. Preview of field's value: 'off'"

}

],

"type" : "mapper_parsing_exception",

"reason" : "failed to parse field [boolean_field] of type [boolean] in document with id '1'. Preview of field's value: 'off'",

"caused_by" : {

"type" : "illegal_argument_exception",

"reason" : "Failed to parse value [off] as only [true] or [false] are allowed."

}

},

"status" : 400

}

PUT test/_doc/1

{

"boolean_field": "1"

}

Response:

{

"error" : {

"root_cause" : [

{

"type" : "mapper_parsing_exception",

"reason" : "failed to parse field [boolean_field] of type [boolean] in document with id '1'. Preview of field's value: '1'"

}

],

"type" : "mapper_parsing_exception",

"reason" : "failed to parse field [boolean_field] of type [boolean] in document with id '1'. Preview of field's value: '1'",

"caused_by" : {

"type" : "illegal_argument_exception",

"reason" : "Failed to parse value [1] as only [true] or [false] are allowed."

}

},

"status" : 400

}

PUT test/_doc/1

{

"boolean_field": "True"

}

Response:

{

"error" : {

"root_cause" : [

{

"type" : "mapper_parsing_exception",

"reason" : "failed to parse field [boolean_field] of type [boolean] in document with id '1'. Preview of field's value: 'True'"

}

],

"type" : "mapper_parsing_exception",

"reason" : "failed to parse field [boolean_field] of type [boolean] in document with id '1'. Preview of field's value: 'True'",

"caused_by" : {

"type" : "illegal_argument_exception",

"reason" : "Failed to parse value [True] as only [true] or [false] are allowed."

}

},

"status" : 400

}

It makes sense that the request would fail for the first value (“off”), but why did it fail for the other two? “True” and “1” both look very “boolean” and those values are actually accepted as valid boolean values in some languages (Python and JavaScript, for example). Shouldn’t Elasticsearch be able to coerce those values into their boolean equivalent? Maybe it should (and it was the case up until version 5.3), maybe it shouldn’t, but that doesn’t change the fact that it doesn’t, and the documentation for the boolean field type is very clear on which values are valid for this type: false, “false”, true, “true” and an empty string, which will be considered a false value.
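If you want to catch such values before they reach Elasticsearch, a client-side check along the following lines could help. It is a minimal sketch that encodes only the accepted values listed above; the function name `is_valid_es_boolean` is hypothetical.

```python
# String forms accepted by the boolean field type, per the list above.
ACCEPTED_BOOLEAN_STRINGS = {"true", "false", ""}


def is_valid_es_boolean(value) -> bool:
    """Return True if the value would be accepted by a boolean field.

    Hypothetical pre-indexing check, for illustration only.
    """
    if isinstance(value, bool):
        return True  # JSON true / false
    # The string comparison is case-sensitive: "True" is rejected.
    return isinstance(value, str) and value in ACCEPTED_BOOLEAN_STRINGS


if __name__ == "__main__":
    for candidate in ["off", "1", "True", "true", "", False]:
        print(repr(candidate), is_valid_es_boolean(candidate))
```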

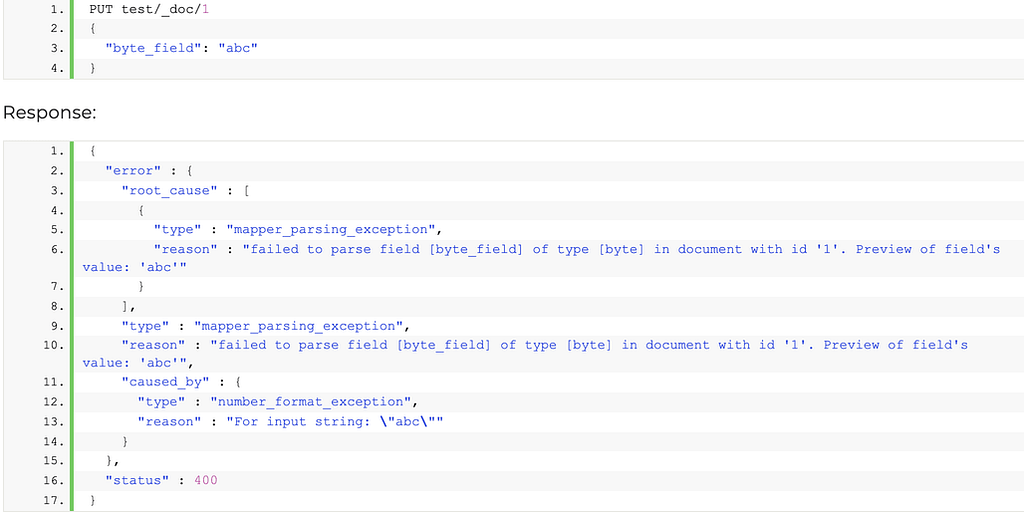

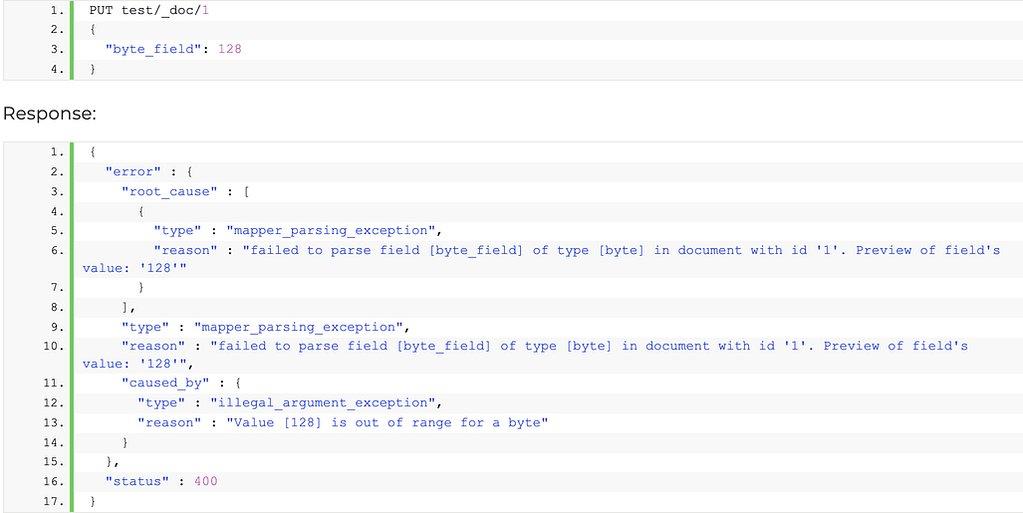

Now let’s break the numeric field on our index. Elasticsearch supports many numeric field types, each one with its own thresholds and characteristics. The byte field type is described as a signed 8-bit integer with a minimum value of -128 and a maximum value of 127. So, it’s a number and it has thresholds. We should be able to easily break it in at least the two ways shown below.

As you can see, the error response includes not only the root cause (failed to parse the field), but also some valuable information that can help debug. For the first request the value could not be parsed because it did not contain a valid numeric value (number_format_exception) and for the second one the problem was that the value provided, even though it was a valid numeric one, was out of the numeric field type range.
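The two failure modes described above (a value that is not numeric at all, and a numeric value outside the type's range) can be told apart with a check like the one below. This is a simplified sketch: the function name `check_byte_value` and its return labels are made up for this example, and it only handles whole numbers, whereas Elasticsearch's own coercion rules cover more cases.

```python
BYTE_MIN, BYTE_MAX = -128, 127  # signed 8-bit integer range


def check_byte_value(value) -> str:
    """Classify a value as 'ok', 'not_a_number' or 'out_of_range' for a byte field.

    Hypothetical helper; only whole numbers and whole-number strings are handled.
    """
    if isinstance(value, bool):
        return "not_a_number"  # booleans are not numbers for this purpose
    if isinstance(value, str):
        try:
            value = int(value)  # e.g. "42" -> 42
        except ValueError:
            return "not_a_number"
    if not isinstance(value, int):
        return "not_a_number"
    if not BYTE_MIN <= value <= BYTE_MAX:
        return "out_of_range"
    return "ok"


if __name__ == "__main__":
    for candidate in ["abc", 128, 127, "-128"]:
        print(repr(candidate), check_byte_value(candidate))
```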

Moving on, let’s work with the IP field. At this point I hope it is very clear that in order to not get the error message, we should comply with the field’s type specific constraints. In this case, the documentation for the IP field type states that we can provide either an IPv4 or an IPv6 value. When we provide a value that is not a valid IPv4 address, this is what happens:

PUT test/_doc/1

{

"ip_field": "355.255.255.255"

}

Response:

{

"error" : {

"root_cause" : [

{

"type" : "mapper_parsing_exception",

"reason" : "failed to parse field [ip_field] of type [ip] in document with id '1'. Preview of field's value: '355.255.255.255'"

}

],

"type" : "mapper_parsing_exception",

"reason" : "failed to parse field [ip_field] of type [ip] in document with id '1'. Preview of field's value: '355.255.255.255'",

"caused_by" : {

"type" : "illegal_argument_exception",

"reason" : "'355.255.255.255' is not an IP string literal."

}

},

"status" : 400

}

The first part (355) is out of range for what is allowed in the IPv4 standard. At first glance, the root cause of the failed request is simply that Elasticsearch was unable to parse the provided field value, but, as you can see, Elasticsearch will usually provide a more specific reason for the parsing failure.
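A similar check can be done on the client side with Python's standard `ipaddress` module, which accepts both IPv4 and IPv6 literals. This is a sketch for pre-validation only; Python's parser and Elasticsearch's parser may disagree on some edge cases, and the function name `is_valid_ip` is hypothetical.

```python
import ipaddress


def is_valid_ip(value: str) -> bool:
    """Return True if the string is a valid IPv4 or IPv6 address literal."""
    try:
        ipaddress.ip_address(value)
    except ValueError:
        return False
    return True


if __name__ == "__main__":
    for candidate in ["355.255.255.255", "179.152.62.82", "::1"]:
        print(candidate, is_valid_ip(candidate))
```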

How to resolve it

At this point it should be clear that the cause for this error message is actually very simple: some value was provided that could not be parsed by the field’s type constraints. Elasticsearch tries to help you by providing information on which field caused the error, what type it has and which value was provided that could not be parsed. In some cases it goes a step further and specifies what caused the error.
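Since the reason string follows a fixed template, the field name, field type, document ID and value preview can be pulled out of it programmatically, for example when scanning logs for the most frequent offenders. The sketch below assumes the message format shown in the responses earlier in this guide; the names `REASON_PATTERN` and `parse_reason` are hypothetical.

```python
import re

# Matches the message template shown in the error responses above.
# The value preview part is optional because it is not always present.
REASON_PATTERN = re.compile(
    r"failed to parse field \[(?P<field>[^\]]+)\] of type \[(?P<type>[^\]]+)\] "
    r"in document with id '(?P<id>[^']*)'\."
    r"(?: Preview of field's value: '(?P<preview>.*)')?"
)


def parse_reason(reason: str):
    """Return a dict with field, type, id and preview, or None if no match."""
    match = REASON_PATTERN.search(reason)
    return match.groupdict() if match else None


if __name__ == "__main__":
    reason = (
        "failed to parse field [boolean_field] of type [boolean] "
        "in document with id '1'. Preview of field's value: 'off'"
    )
    print(parse_reason(reason))
```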

Here are some things you could check if you are getting this error:

- Do you know what the constraints for that specific field type are? Double check the documentation.

- Perhaps there’s a field type that will work a little better to accommodate the accepted range of that field. (Maybe you should remap your byte field to a short.)

- Are the values being generated by multiple sources? Maybe one of them is not sanitizing the data before storing it (for example, a numeric field in the UI that does not validate the input).

In any case, the solution will most likely involve finding out which source is generating invalid values for a field and either fixing it there or changing that field’s mapping to accommodate the incoming values.
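If fixing the source is not possible right away, the ignore_malformed mapping parameter mentioned at the top of this guide is one way to keep documents flowing. The sketch below only builds the request body you could send when creating an index; the function name `build_mapping` is hypothetical, and the field names are the ones used in the examples above. Keep in mind the trade-off: with this option the document is indexed, but the malformed value is not indexed for that field.

```python
import json


def build_mapping(ignore_malformed: bool) -> dict:
    """Build a create-index body for the byte and ip fields used in this guide.

    Hypothetical helper; it only assembles a dictionary and sends nothing.
    """
    return {
        "mappings": {
            "properties": {
                "byte_field": {"type": "byte", "ignore_malformed": ignore_malformed},
                "ip_field": {"type": "ip", "ignore_malformed": ignore_malformed},
            }
        }
    }


if __name__ == "__main__":
    # Print the JSON body, ready to paste after a "PUT <index name>" line.
    print(json.dumps(build_mapping(True), indent=2))
```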

Log context

It might help to know where in the Elasticsearch code this error is thrown. If we take a look at the FieldMapper.java class in the 7.14 codebase, we can see that it is thrown by the parse() method whenever it can’t parse the value of the field according to the field’s type. Here is the parse() method of the FieldMapper class:

public void parse(ParseContext context) throws IOException {

try {

if (hasScript) {

throw new IllegalArgumentException("Cannot index data directly into a field with a [script] parameter");

}

parseCreateField(context);

} catch (Exception e) {

String valuePreview = "";

try {

XContentParser parser = context.parser();

Object complexValue = AbstractXContentParser.readValue(parser, HashMap::new);

if (complexValue == null) {

valuePreview = "null";

} else {

valuePreview = complexValue.toString();

}

} catch (Exception innerException) {

throw new MapperParsingException("failed to parse field [{}] of type [{}] in document with id '{}'. " +

"Could not parse field value preview,",

e, fieldType().name(), fieldType().typeName(), context.sourceToParse().id());

}

throw new MapperParsingException("failed to parse field [{}] of type [{}] in document with id '{}'. " +

"Preview of field's value: '{}'", e, fieldType().name(), fieldType().typeName(),

context.sourceToParse().id(), valuePreview);

}

multiFields.parse(this, context);

}

If you are really interested in digging into how the FieldMapper.parse() method comes to throw this error, one good place to look is the unit test files. There is one for each field type, and you can find them all in elasticsearch/server/src/test/java/org/elasticsearch/index/mapper/*FieldMapperTests.java.

For instance, if we take a look at the unit tests for the boolean field type, we will find the test function below, where it expects that the field mapper parser fails for all the given values (accepted values for a boolean type are false, “false”, true, “true” and an empty string):

public void testParsesBooleansStrict() throws IOException {

DocumentMapper defaultMapper = createDocumentMapper(fieldMapping(this::minimalMapping));

// omit "false"/"true" here as they should still be parsed correctly

for (String value : new String[]{"off", "no", "0", "on", "yes", "1"}) {

MapperParsingException ex = expectThrows(MapperParsingException.class,

() -> defaultMapper.parse(source(b -> b.field("field", value))));

assertEquals("failed to parse field [field] of type [boolean] in document with id '1'. " +

"Preview of field's value: '" + value + "'", ex.getMessage());

}

}

As another example, in the unit tests for the keyword field type there’s a test that expects the field mapper parser to fail for null values:

public void testParsesKeywordNullStrict() throws IOException {

DocumentMapper defaultMapper = createDocumentMapper(fieldMapping(this::minimalMapping));

Exception e = expectThrows(

MapperParsingException.class,

() -> defaultMapper.parse(source(b -> b.startObject("field").nullField("field_name").endObject()))

);

assertEquals(

"failed to parse field [field] of type [keyword] in document with id '1'. " + "Preview of field's value: '{field_name=null}'",

e.getMessage()

);

}

Finally, you can also take a look at DocumentParserTests.java, where, among other things, you will find a test function that captures the essence of this error message. Here it expects the parser to fail when a boolean value is assigned to a long field and also when a string value is assigned to a boolean field.

public void testUnexpectedFieldMappingType() throws Exception {

DocumentMapper mapper = createDocumentMapper(mapping(b -> {

b.startObject("foo").field("type", "long").endObject();

b.startObject("bar").field("type", "boolean").endObject();

}));

{

MapperException exception = expectThrows(MapperException.class,

() -> mapper.parse(source(b -> b.field("foo", true))));

assertThat(exception.getMessage(), containsString("failed to parse field [foo] of type [long] in document with id '1'"));

}

{

MapperException exception = expectThrows(MapperException.class,

() -> mapper.parse(source(b -> b.field("bar", "bar"))));

assertThat(exception.getMessage(), containsString("failed to parse field [bar] of type [boolean] in document with id '1'"));

}

}

You can probably find many more examples like these in the remaining unit test files. Here is a complete list of Elastic’s unit tests for the field mapping/parsing part of Elasticsearch’s code. Since the actual cause of the error you are getting could sometimes be a little unclear, taking a look at the suite of tests Elastic runs against the specific field type could give you some hints on what is causing the parsing of the value to fail.

- AllFieldMapperTests.java

- BinaryFieldMapperTests.java

- BooleanFieldMapperTests.java

- BooleanScriptMapperTests.java

- ByteFieldMapperTests.java

- CompletionFieldMapperTests.java

- CopyToMapperTests.java

- DateScriptMapperTests.java

- DocCountFieldMapperTests.java

- DocumentMapperTests.java

- DoubleFieldMapperTests.java

- DoubleScriptMapperTests.java

- FieldAliasMapperTests.java

- FieldNamesFieldMapperTests.java

- FloatFieldMapperTests.java

- GeoPointFieldMapperTests.java

- GeoPointScriptMapperTests.java

- GeoShapeFieldMapperTests.java

- HalfFloatFieldMapperTests.java

- IdFieldMapperTests.java

- IndexFieldMapperTests.java

- IntegerFieldMapperTests.java

- IpFieldMapperTests.java

- IpRangeFieldMapperTests.java

- IpScriptMapperTests.java

- KeywordFieldMapperTests.java

- KeywordScriptMapperTests.java

- LegacyGeoShapeFieldMapperTests.java

- LegacyTypeFieldMapperTests.java

- LongFieldMapperTests.java

- LongScriptMapperTests.java

- MultiFieldCopyToMapperTests.java

- NestedObjectMapperTests.java

- NumberFieldMapperTests.java

- ObjectMapperTests.java

- ParametrizedMapperTests.java

- PathMapperTests.java

- RangeFieldMapperTests.java

- RootObjectMapperTests.java

- RoutingFieldMapperTests.java

- ShortFieldMapperTests.java

- SourceFieldMapperTests.java

- TextFieldMapperTests.java

- TypeFieldMapperTests.java

- WholeNumberFieldMapperTests.java

Overview

In Elasticsearch, an index (plural: indices) contains a schema and can have one or more shards and replicas. An Elasticsearch index is divided into shards and each shard is an instance of a Lucene index.

Indices are used to store the documents in dedicated data structures corresponding to the data type of fields. For example, text fields are stored inside an inverted index whereas numeric and geo fields are stored inside BKD trees.

Examples

Create index

The following example is based on Elasticsearch version 7.x onwards (earlier versions expect a mapping type name inside mappings). An index with two shards, each having one replica, will be created with the name test_index1:

PUT /test_index1?pretty

{

"settings" : {

"number_of_shards" : 2,

"number_of_replicas" : 1

},

"mappings" : {

"properties" : {

"tags" : { "type" : "keyword" },

"updated_at" : { "type" : "date" }

}

}

}

List indices

All the index names and their basic information can be retrieved using the following command:

GET _cat/indices?v

Index a document

Let’s add a document in the index with the command below:

PUT test_index1/_doc/1

{

"tags": [

"opster",

"elasticsearch"

],

"updated_at": "2020-01-01"

}

Query an index

GET test_index1/_search

{

"query": {

"match_all": {}

}

}

Query multiple indices

It is possible to search multiple indices with a single request. In a raw HTTP request, index names should be sent in comma-separated format, as shown in the example below. In a query sent via a programming language client, such as Python or Java, index names are passed as a list.

GET test_index1,test_index2/_search
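When you start from a list of index names, as a language client would, the comma-separated form used in the raw request can be produced as follows. This is a small sketch that only builds the request path; the function name `search_path` is hypothetical and no request is sent.

```python
def search_path(index_names) -> str:
    """Build the _search path for one or more index names."""
    if not index_names:
        raise ValueError("at least one index name is required")
    return "/" + ",".join(index_names) + "/_search"


if __name__ == "__main__":
    print(search_path(["test_index1", "test_index2"]))
```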

Delete indices

DELETE test_index1

Common problems

- It is good practice to define the settings and mapping of an index wherever possible because, if this is not done, Elasticsearch tries to automatically guess the data type of fields at the time of indexing. This automatic process may have disadvantages, such as mapping conflicts, duplicate data and incorrect data types being set in the index. If the fields are not known in advance, it’s better to use dynamic index templates.

- Elasticsearch supports wildcard patterns in index names, which sometimes helps with querying multiple indices but can also be very destructive. For example, it is possible to delete all the indices with a single command:

DELETE /*

To disable this, you can add the following lines in the elasticsearch.yml:

action.destructive_requires_name: true

Document in Elasticsearch

What is an Elasticsearch document?

While an SQL database has rows of data stored in tables, Elasticsearch stores data as multiple documents inside an index. This is where the analogy must end however, since the way that Elasticsearch treats documents and indices differs significantly from a relational database.

For example, documents could be:

- Products in an e-commerce index

- Log lines in a data logging application

- Invoice lines in an invoicing system

Document fields

Each document is essentially a JSON structure, which is ultimately considered to be a series of key:value pairs. These pairs are then indexed in a way that is determined by the document mapping. The mapping defines the field data type as text, keyword, float, date, geo_point or one of various other data types.

Elasticsearch documents are described as schema-less because Elasticsearch does not require us to pre-define the index field structure, nor does it require all documents in an index to have the same structure. However, once a field is mapped to a given data type, then all documents in the index must maintain that same mapping type.

Each field can also be mapped in more than one way in the index. This can be useful because we may want a keyword structure for aggregations, and at the same time be able to keep an analysed data structure which enables us to carry out full text searches for individual words in the field.


Document source

An Elasticsearch document _source consists of the original JSON source data before it is indexed. This data is retrieved when fetched by a search query.

Document metadata

Each document is also associated with metadata, the most important items being:

_index – The index where the document is stored

_id – The unique ID which identifies the document in the index

Documents and index architecture

Note that different applications could consider a “document” to be a different thing. For example, in an invoicing system, we could have an architecture which stores invoices as documents (1 document per invoice), or we could have an index structure which stores multiple documents as “invoice lines” for each invoice. The choice would depend on how we want to store, map and query the data.

Examples:

Creating a document in the users index:

POST /users/_doc

{

"name" : "Petey",

"lastname" : "Cruiser",

"email" : "petey@gmail.com"

}

In the above request, we haven’t specified an ID for the document, so the index operation generates a unique ID for it. Here _doc is the type of the document.

POST /users/_doc/1

{

"name" : "Petey",

"lastname" : "Cruiser",

"email" : "petey@gmail.com"

}

In the above request, the document will be created with ID 1.

You can use the GET request below to retrieve a document from the index by its ID:

GET /users/_doc/1

Below is the result, which contains the document (in the _source field) along with its metadata:

{

"_index": "users",

"_type": "_doc",

"_id": "1",

"_version": 1, "_seq_no": 1, "_primary_term": 1,

"found": true,

"_source": {

"name": "Petey",

"lastname": "Cruiser",

"email": "petey@gmail.com"

}

}

Notes

Mapping types are deprecated starting with version 7.0, so for backward compatibility all documents in version 7.x are stored under the type ‘_doc’. Starting with 8.x, types are completely removed from the Elasticsearch APIs.

Log Context

The log “failed to parse field [{}] of type [{}] in document with id ‘{}’.” is thrown by the FieldMapper.java class. The following excerpt from the Elasticsearch source code gives more context:

valuePreview = "null";

} else {

valuePreview = complexValue.toString();

}

} catch (Exception innerException) {

throw new MapperParsingException("failed to parse field [{}] of type [{}] in document with id '{}'. " +

"Could not parse field value preview,",

e, fieldType().name(), fieldType().typeName(), context.sourceToParse().id());

}

throw new MapperParsingException("failed to parse field [{}] of type [{}] in document with id '{}'. " +
